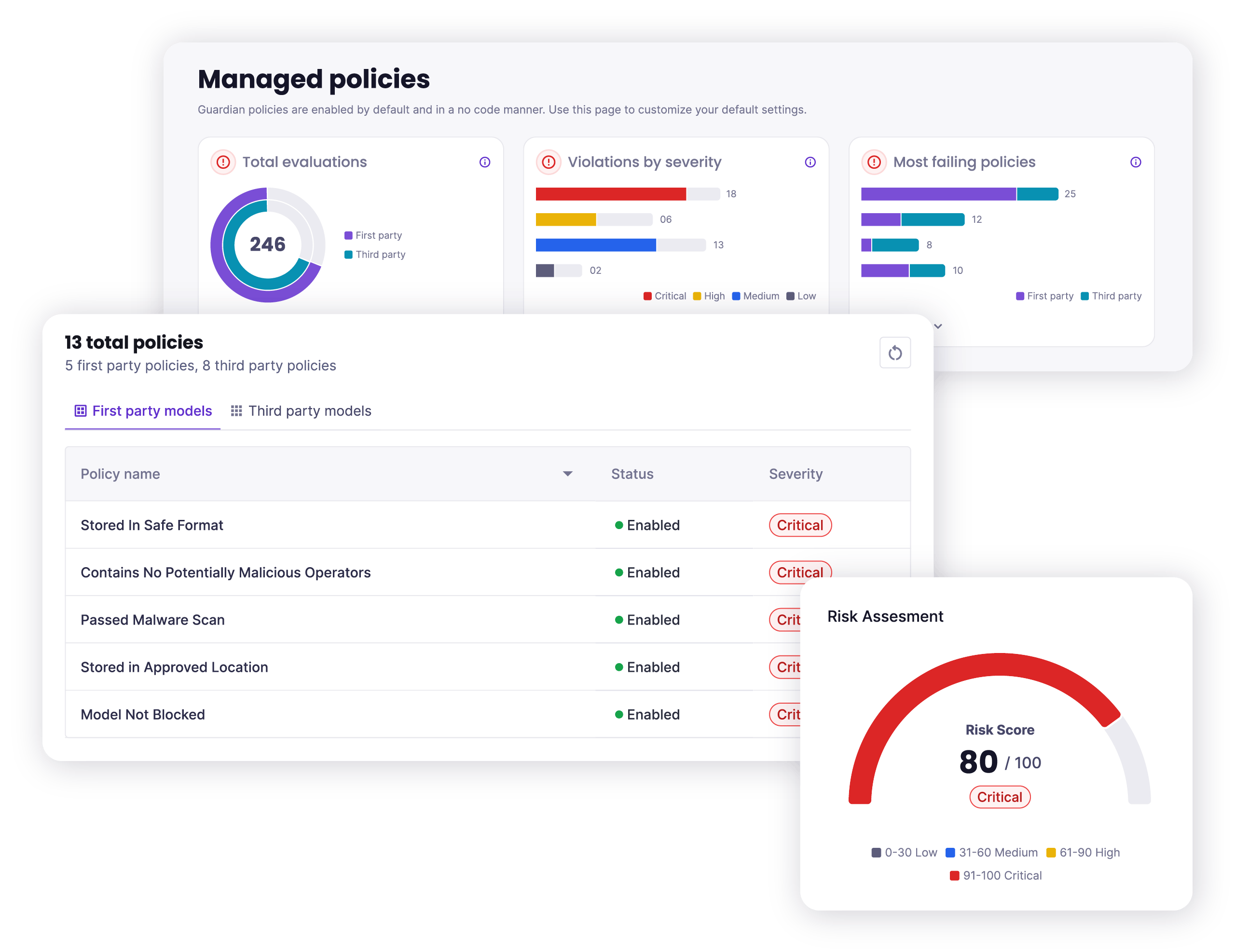

The Platform for AI Security

Protect AI is the broadest and most comprehensive AI security solution. Our suite of products (Guardian, Recon, and Layer) operates on a single, unified platform and secures AI applications from model selection and testing to runtime and beyond.

Fortune Cyber 60 Award 2023

CB Insights AI 100 2024

Inc. Best in Business 2024

Global InfoSec Awards Winner 2024

SINET16 Innovator Award 2024

Inc. Best Workplaces 2024

Enterprise Security Tech Cyber Top Company 2024

Product 50 Awards Best Product Leader 2025

Making AI Safe at Enterprise Scale

Top Tier AI Security Products

Protect AI’s suite of products delivers security end to end. From model selection and import, through red teaming and testing, to deployment and runtime monitoring, we’ve got you covered every step of the way. Protect AI has the most advanced AI security product suite on the market.

A Platform Built for Scale

Your security needs to run at the speed and scale of AI. With flexible deployment options, modular architecture, and easy integrations, Protect AI fits into any environment and evolves to meet your needs, both the expected and the unexpected.

Unrivaled Threat Research

Every product from Protect AI is fueled by unparalleled access to threat research and awareness. Backed by 17k+ security researchers from the huntr community, and in partnership with Hugging Face, our first- and third-party threat research feeds our products so teams can stay ahead of attackers.

End-to-End Security for Your AI Applications

Protect AI by the Numbers

Partnering with Industry Leaders

We work with the best of the best, creating a powerful ecosystem of partners that share our commitment to securing AI innovation.